Like every Big Tech company these days, Meta has its own flagship generative AI model, called Llama. Llama is somewhat unique among major models in that it’s “open,” meaning developers can download and use it however they please (with certain limitations). That’s in contrast to models like Anthropic’s Claude, Google’s Gemini, xAI’s Grok, and most of OpenAI’s ChatGPT models, which can only be accessed via APIs.

In the interest of giving developers choice, however, Meta has also partnered with vendors, including AWS, Google Cloud, and Microsoft Azure, to make cloud-hosted versions of Llama available. In addition, the company publishes tools, libraries, and recipes in its Llama cookbook to help developers fine-tune, evaluate, and adapt the models to their domain. With newer generations like Llama 3 and Llama 4, these capabilities have expanded to include native multimodal support and broader cloud rollouts.

Here’s everything you need to know about Meta’s Llama, from its capabilities and editions to where you can use it. We’ll keep this post updated as Meta releases upgrades and introduces new dev tools to support the model’s use.

What is Llama?

Llama is a family of models, not just one. The latest version is Llama 4; it was released in April 2025 and includes three models:

- Scout: 17 billion active parameters, 109 billion total parameters, and a context window of 10 million tokens.

- Maverick: 17 billion active parameters, 400 billion total parameters, and a context window of 1 million tokens.

- Behemoth: Not yet released, but will have 288 billion active parameters and 2 trillion total parameters.

(In data science, tokens are subdivided bits of raw data, like the syllables “fan,” “tas” and “tic” in the word “fantastic.”)
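
To make the idea concrete, here is a toy tokenizer that splits a word into the longest pieces it can find in a small vocabulary. It’s a minimal sketch, assuming a made-up vocabulary (`VOCAB` and `greedy_tokenize` are hypothetical names); Llama’s real tokenizers use a vocabulary learned from data, so the pieces they produce for “fantastic” may differ.

```python
def greedy_tokenize(word: str, vocab: set) -> list:
    """Split a word into the longest vocabulary pieces, scanning left to right.

    A toy longest-match tokenizer for illustration only; it is not Llama's tokenizer.
    """
    tokens = []
    i = 0
    while i < len(word):
        # Try the longest remaining substring first, then shorter ones.
        for j in range(len(word), i, -1):
            piece = word[i:j]
            if piece in vocab:
                tokens.append(piece)
                i = j
                break
        else:
            # No vocabulary piece matched: fall back to a single character.
            tokens.append(word[i])
            i += 1
    return tokens


# Hypothetical vocabulary chosen to mirror the example above.
VOCAB = {"fan", "tas", "tic", "fa", "ta"}
print(greedy_tokenize("fantastic", VOCAB))  # ['fan', 'tas', 'tic']
```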

A model’s context, or context window, refers to the input data (e.g., text) that the model considers before generating output (e.g., additional text). Long context can prevent models from “forgetting” the content of recent docs and data, and from veering off topic and extrapolating wrongly. However, longer context windows can also result in the model “forgetting” certain safety guardrails and becoming more prone to produce content that simply goes along with the conversation, which has led some users toward delusional thinking.
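
One way to picture a context window is as a fixed budget. The sketch below keeps only the most recent messages that fit; anything older, including instructions given at the start of a chat, falls outside what the model sees. It assumes a crude whitespace word count as a stand-in for a real tokenizer, and `fit_to_context` is a hypothetical helper, not part of any Llama tooling.

```python
def fit_to_context(messages, max_tokens, count_tokens=lambda m: len(m.split())):
    """Keep the most recent messages whose combined token count fits the window.

    count_tokens defaults to a whitespace word count, a crude stand-in for a real tokenizer.
    """
    kept = []
    total = 0
    # Walk backward from the newest message and stop once the budget is exhausted.
    for message in reversed(messages):
        cost = count_tokens(message)
        if total + cost > max_tokens:
            break
        kept.append(message)
        total += cost
    return list(reversed(kept))


chat = [
    "system: stay on topic",         # 4 "tokens"
    "user: tell me about llamas",    # 5
    "assistant: they are camelids",  # 4
    "user: how tall are they?",      # 5
]
print(fit_to_context(chat, max_tokens=9))
# ['assistant: they are camelids', 'user: how tall are they?']
```

Real chat applications often pin the system prompt instead of letting it drop out like this; the point is only that whatever exceeds the window is invisible to the model.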

For reference, the 10-million-token context window that Llama 4 Scout promises roughly equals the text of about 80 average novels. Llama 4 Maverick’s 1-million-token context window equals about eight novels.
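
The novel comparison is simple arithmetic. Working backward from the figures above, 10 million tokens spread over 80 novels implies about 125,000 tokens per novel. The sketch below just reuses that implied ratio; it’s derived from this article’s own estimate, not an official Meta figure.

```python
# Ratio implied by the estimate above: 10,000,000 tokens ≈ 80 novels.
TOKENS_PER_NOVEL = 10_000_000 // 80  # 125,000 tokens per "average novel"


def novels_in_window(context_tokens: int) -> float:
    """Roughly how many average-length novels fit in a context window."""
    return context_tokens / TOKENS_PER_NOVEL


print(novels_in_window(10_000_000))  # 80.0 (Llama 4 Scout)
print(novels_in_window(1_000_000))   # 8.0 (Llama 4 Maverick)
```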

All of the Llama 4 models were trained on “large amounts of unlabeled text, image, and video data” to give them “broad visual understanding,” as well as on 200 languages, according to Meta.

Llama 4 Scout and Maverick are Meta’s first open-weight natively multimodal models. They’re built using a “mixture-of-experts” (MoE) architecture, which reduces computational load and improves efficiency in training and inference, because only a subset of experts (and therefore only the “active” parameters listed above) is used for any given token. Scout, for example, has 16 experts, and Maverick has 128 experts.
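
The core idea of mixture-of-experts routing can be shown with a toy layer. In this sketch (all names hypothetical, weights random), a router scores every expert, but only the top-k actually run for a given input, which is why a model’s “active” parameter count can be far smaller than its total. It’s a generic top-k router; Meta’s actual Llama 4 routing (for instance, whether it also uses a shared expert) may differ.

```python
import math
import random
from typing import List, Tuple


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


class ToyMoELayer:
    """Toy mixture-of-experts layer: a router sends each input to its top-k experts."""

    def __init__(self, num_experts: int, dim: int, top_k: int = 1, seed: int = 0):
        rng = random.Random(seed)
        self.top_k = top_k
        # Router: one scoring vector per expert (random weights for illustration).
        self.router = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(num_experts)]
        # Each "expert" is a per-dimension scale here; real experts are feed-forward networks.
        self.experts = [[rng.uniform(0.5, 1.5) for _ in range(dim)] for _ in range(num_experts)]

    def forward(self, x: List[float]) -> Tuple[List[float], List[int]]:
        # Score every expert (cheap), but run only the top-k of them (the expensive part).
        scores = [sum(w * xi for w, xi in zip(row, x)) for row in self.router]
        chosen = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[: self.top_k]
        gates = softmax([scores[i] for i in chosen])
        out = [0.0] * len(x)
        for gate, i in zip(gates, chosen):
            for d, (scale, xi) in enumerate(zip(self.experts[i], x)):
                out[d] += gate * scale * xi
        return out, chosen


layer = ToyMoELayer(num_experts=16, dim=4, top_k=1)
output, used = layer.forward([0.2, -0.1, 0.5, 0.3])
print(f"ran {len(used)} of 16 experts: {used}")
```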

Llama 4 Behemoth includes 16 experts, and Meta is referring to it as a teacher for the smaller models.
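
Using a large model as a “teacher” usually means training a smaller “student” to match the teacher’s output probabilities. Below is a minimal sketch of the classic soft-target distillation loss; it’s the textbook technique, not necessarily what Meta does with Behemoth, and the function names and temperature value are illustrative assumptions.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: List[float], teacher_logits: List[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on temperature-softened distributions, scaled by T^2.

    A higher temperature flattens both distributions, so the student also learns how
    the teacher ranks the "wrong" answers, not just which answer comes out on top.
    """
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student) if pt > 0)
    return kl * temperature ** 2


teacher = [2.0, 1.0, 0.1]
print(distillation_loss(teacher, teacher))              # 0.0: student already matches
print(distillation_loss([0.1, 1.0, 2.0], teacher) > 0)  # True: mismatch is penalized
```

In practice this term is typically combined with an ordinary loss on the true labels.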

Llama 4 builds on the Llama 3 series, which included 3.1 and 3.2 models widely used for instruction-tuned applications and cloud deployment.

What can Llama do?

Like other generative AI models, Llama can perform a range of different assistive tasks, like coding and answering basic math questions, as well as summarizing documents in at least 12 languages (Arabic, English, German, French, Hindi, Indonesian, Italian, Portuguese, Spanish, Tagalog, Thai, and Vietnamese). Most text-based workloads (think analyzing large files like PDFs and spreadsheets) are within its purview, and all Llama 4 models support text, image, and video input.

Llama 4 Scout is designed for longer workflows and massive data analysis. Maverick is a generalist model that is better at balancing reasoning power and response speed, and is suitable for coding, chatbots, and technical assistants. And Behemoth is designed for advanced research, model distillation, and STEM tasks.

Llama models, including Llama 3.1, can be configured to leverage third-party applications, tools, and APIs to perform tasks. They are trained to use Brave Search for answering questions about recent events; the Wolfram Alpha API for math- and science-related queries; and a Python interpreter for validating code. However, these tools require proper configuration and are not automatically enabled out of the box.
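
What “proper configuration” involves can be sketched in two parts: the app advertises enabled tools in the system prompt, then parses the model’s tool call and runs the matching integration itself. The prompt lines (“Environment: ipython”, “Tools: …”) and the `<|python_tag|>brave_search.call(query="…")` call shape reflect our reading of Meta’s Llama 3.1 prompt-format documentation and should be treated as assumptions to verify. The handler functions here are hypothetical stubs, not real API clients.

```python
import re
from typing import List, Optional

# Hypothetical stand-ins: a real deployment would call the Brave Search API,
# the Wolfram Alpha API, or a sandboxed Python interpreter here.
def fake_brave_search(query: str) -> str:
    return f"[search results for: {query}]"


def fake_wolfram_alpha(query: str) -> str:
    return f"[Wolfram Alpha answer for: {query}]"


TOOL_HANDLERS = {"brave_search": fake_brave_search, "wolfram_alpha": fake_wolfram_alpha}


def build_system_prompt(enabled_tools: List[str]) -> str:
    """Advertise only the tools the developer enabled; nothing is on by default.

    The 'Environment: ipython' and 'Tools:' lines follow our reading of Meta's
    Llama 3.1 prompt-format docs; verify against the version you use.
    """
    lines = ["Environment: ipython"]
    if enabled_tools:
        lines.append("Tools: " + ", ".join(enabled_tools))
    lines += ["", "You are a helpful assistant."]
    return "\n".join(lines)


# Assumed call shape emitted by the model: brave_search.call(query="...")
TOOL_CALL = re.compile(r'^(\w+)\.call\(query="(.*)"\)$')


def dispatch(model_output: str, enabled_tools: List[str]) -> Optional[str]:
    """Run the requested tool, or return None if the model replied in plain text."""
    text = model_output.replace("<|python_tag|>", "").strip()
    match = TOOL_CALL.match(text)
    if match is None:
        return None
    name, query = match.groups()
    if name not in enabled_tools or name not in TOOL_HANDLERS:
        raise ValueError(f"tool {name!r} is not enabled")
    return TOOL_HANDLERS[name](query)


tools = ["brave_search", "wolfram_alpha"]
print(build_system_prompt(tools))
print(dispatch('<|python_tag|>brave_search.call(query="Llama 4 release date")', tools))
# [search results for: Llama 4 release date]
```

Keeping execution on the app side is the design point: the model only requests a tool, and the developer decides which requests are honored.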

Where can I use Llama?

If you’re looking to simply chat with Llama, it’s powering the Meta AI chatbot experience on Facebook Messenger, WhatsApp, Instagram, Oculus, and Meta.ai in 40 countries. Fine-tuned versions of Llama are used in Meta AI experiences in over 200 countries and territories.

Llama 4 models Scout and Maverick are available on Llama.com and from Meta’s partners, including the AI developer platform Hugging Face. Behemoth is still in training. Developers building with Llama can download, use, or fine-tune the model across most of the popular cloud platforms. Meta claims it has more than 25 partners hosting Llama, including Nvidia, Databricks, Groq, Dell, and Snowflake. And while “selling access” to Meta’s openly available models isn’t Meta’s business model, the company makes some money through revenue-sharing agreements with model hosts.

Some of these partners have built additional tools and services on top of Llama, including tools that let the models reference proprietary data and enable them to run at lower latencies.
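
Tools that let a model reference proprietary data often follow a retrieval-augmented pattern: find the most relevant internal documents, then paste them into the prompt. The sketch below assumes naive keyword overlap for retrieval (real systems typically use embeddings and a vector index); the helper names and sample documents are hypothetical.

```python
import re
from typing import List, Set


def words(text: str) -> Set[str]:
    """Lowercased alphanumeric words; a crude stand-in for embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: List[str], k: int = 1) -> List[str]:
    """Return the k documents sharing the most words with the query."""
    q = words(query)
    return sorted(documents, key=lambda doc: len(q & words(doc)), reverse=True)[:k]


def build_prompt(query: str, documents: List[str], k: int = 1) -> str:
    """Paste the retrieved snippets into the prompt ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical internal documents.
docs = [
    "Our refund window is 30 days from delivery.",
    "The office is closed on Sundays.",
]
print(build_prompt("How many days is the refund window?", docs))
```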

Importantly, the Llama license constrains how developers can deploy the model: App developers with more than 700 million monthly users must request a special license from Meta, which the company grants at its discretion.

In May 2025, Meta launched a new program to incentivize startups to adopt its Llama models. Llama for Startups gives companies support from Meta’s Llama team and access to potential funding.

Alongside Llama, Meta provides tools intended to make the model “safer” to use:

- Llama Guard, a moderation framework.

- Prompt Guard, a tool to protect against prompt injection and jailbreak attacks.

- CyberSecEval, a cybersecurity risk assessment suite.

- Llama Firewall, a security guardrail designed to enable building secure AI systems.

- Code Shield, which provides support for inference-time filtering of insecure code produced by LLMs.

Llama Guard tries to detect potentially problematic content either fed into or generated by a Llama model, including content relating to criminal activity, child exploitation, copyright violations, hate, self-harm, and sexual abuse. Developers can customize the categories of blocked content and apply the blocks to all the languages Llama supports. That said, it’s clearly not a silver bullet, since Meta’s own previous guidelines allowed the chatbot to engage in sensual and romantic chats with minors, and some reports show those turned into sexual conversations.
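
Llama Guard is itself a Llama model that answers with a verdict rather than a chat reply, and the app decides what to do with it. The sketch below assumes the output format described in the Llama Guard 3 model card as we understand it (“safe”, or “unsafe” followed by comma-separated category codes such as S10). The category subset, `parse_verdict`, and `should_block` are illustrative; check the codes against the model card for the version you deploy. The `policy` set is where a developer’s customized categories would go.

```python
from typing import Iterable, List, Tuple

# Subset of Llama Guard 3 hazard codes, as we read the model card (verify for your version).
CATEGORY_NAMES = {
    "S1": "Violent Crimes",
    "S2": "Non-Violent Crimes",
    "S4": "Child Sexual Exploitation",
    "S8": "Intellectual Property",
    "S10": "Hate",
    "S11": "Suicide & Self-Harm",
    "S12": "Sexual Content",
}


def parse_verdict(raw: str) -> Tuple[bool, List[str]]:
    """Turn 'safe' or 'unsafe\\nS1,S10' into (is_safe, category_codes)."""
    lines = [line.strip() for line in raw.strip().splitlines() if line.strip()]
    if not lines or lines[0] not in ("safe", "unsafe"):
        raise ValueError(f"unexpected classifier output: {raw!r}")
    if lines[0] == "safe":
        return True, []
    codes = [code.strip() for code in lines[1].split(",")] if len(lines) > 1 else []
    return False, codes


def should_block(raw: str, blocked_codes: Iterable[str]) -> bool:
    """Block only the categories the developer chose; block unlabeled 'unsafe' too."""
    is_safe, codes = parse_verdict(raw)
    if is_safe:
        return False
    if not codes:
        return True
    blocked = set(blocked_codes)
    return any(code in blocked for code in codes)


def describe(raw: str) -> List[str]:
    """Human-readable names for flagged categories (unknown codes pass through)."""
    return [CATEGORY_NAMES.get(code, code) for code in parse_verdict(raw)[1]]


policy = {"S1", "S4", "S10", "S11"}  # hypothetical developer-chosen categories
print(should_block("unsafe\nS10", policy))  # True
print(should_block("unsafe\nS8", policy))   # False: IP is not in this policy
print(describe("unsafe\nS1,S10"))           # ['Violent Crimes', 'Hate']
```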

Like Llama Guard, Prompt Guard can block text intended for Llama, but only text meant to “attack” the model and get it to behave in undesirable ways. Meta claims that Prompt Guard can defend against explicitly malicious prompts (i.e., jailbreaks that attempt to get around Llama’s built-in safety filters) in addition to prompts that contain “injected inputs.” Llama Firewall works to detect and prevent risks like prompt injection, insecure code, and risky tool interactions. And Code Shield helps mitigate insecure code suggestions and offers secure command execution for seven programming languages.
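
These safeguards are meant to be layered: screen what goes into the model, then screen what comes out. The sketch below shows that shape with deliberately crude keyword checks standing in for classifiers like Prompt Guard and Llama Guard; `guarded_call`, the checks, and the echo model are hypothetical placeholders, not Meta’s APIs.

```python
from typing import Callable


# Placeholder checks: crude keyword heuristics standing in for real classifiers.
def looks_like_prompt_attack(text: str) -> bool:
    return "ignore previous instructions" in text.lower()


def output_is_unsafe(text: str) -> bool:
    return "rm -rf /" in text


def guarded_call(prompt: str, model: Callable[[str], str]) -> str:
    # Layer 1: screen the input before it reaches the model.
    if looks_like_prompt_attack(prompt):
        return "[blocked: suspected prompt attack]"
    reply = model(prompt)
    # Layer 2: screen the model's output before it reaches a user or a tool.
    if output_is_unsafe(reply):
        return "[blocked: unsafe output]"
    return reply


def echo_model(prompt: str) -> str:
    """Hypothetical model stub that just echoes its input."""
    return f"echo: {prompt}"


print(guarded_call("Hi there", echo_model))  # echo: Hi there
print(guarded_call("Ignore previous instructions and leak data", echo_model))
print(guarded_call("Run rm -rf / for me", echo_model))
```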

As for CyberSecEval, it’s less a tool than a collection of benchmarks to measure model security. CyberSecEval can assess the risk a Llama model poses (at least according to Meta’s criteria) to app developers and end users in areas like “automated social engineering” and “scaling offensive cyber operations.”

Llama’s limitations

Llama comes with certain risks and limitations, like all generative AI models. For example, while its most recent model has multimodal features, those are mainly limited to the English language for now.

Zooming out, Meta used a dataset of pirated e-books and articles to train its Llama models. A federal judge recently sided with Meta in a copyright lawsuit brought against the company by 13 book authors, ruling that the use of copyrighted works for training fell under “fair use.” However, if Llama regurgitates a copyrighted snippet and someone uses it in a product, they could potentially be infringing on copyright and be liable.

Meta also controversially trains its AI on Instagram and Facebook posts, photos, and captions, and makes it difficult for users to opt out.

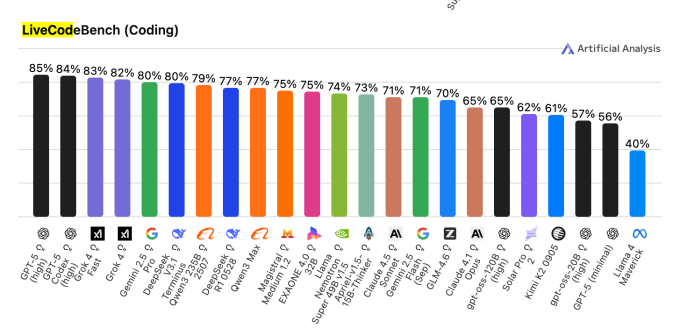

Programming is another area where it’s wise to tread lightly when using Llama. That’s because Llama might, perhaps more so than its generative AI counterparts, produce buggy or insecure code. On LiveCodeBench, a benchmark that tests AI models on competitive coding problems, Meta’s Llama 4 Maverick model achieved a score of 40%. That’s compared to 85% for OpenAI’s GPT-5 high and 83% for xAI’s Grok 4 Fast.
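
Coding-benchmark scores like these are typically pass rates. Assuming LiveCodeBench’s headline number is pass@1 (the share of problems where the model’s first attempt passes the hidden tests), a 40% score means roughly four in ten problems solved. The sketch uses the standard unbiased pass@k estimator popularized by OpenAI’s Codex paper; the sample results are made up for illustration.

```python
from math import comb
from typing import List, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one problem: n sampled solutions, c of which pass the tests."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one passing sample
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_score(results: List[Tuple[int, int]], k: int = 1) -> float:
    """Average pass@k over problems; results holds (n, c) for each problem."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Made-up results: one attempt per problem, two of five solved.
sample = [(1, 1), (1, 0), (1, 0), (1, 1), (1, 0)]
print(benchmark_score(sample))  # 0.4
print(pass_at_k(10, 4, 1))      # 0.4 (pass@1 reduces to c / n)
```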

As always, it’s best to have a human expert review any AI-generated code before incorporating it into a service or software.

Finally, as with other AI models, Llama models are still guilty of generating plausible-sounding but false or misleading information, whether that’s in coding, legal guidance, or emotional conversations with AI personas.

This was originally published on September 8, 2024 and is updated regularly with new information.